Lingua Ignota Digitalis: Languages Created by Automata

Rubén Rodríguez Abril

What would a language created entirely by automata look like? Would it be intelligible to human beings? This essay explores the possibility that artificial agents might develop fully autonomous linguistic systems, that is, languages that do not depend on human linguistic structures at all. Swarms of artificial agents engaged in cooperative games have been shown to evolve emergent languages, complete with proto-phrases, hybrid dialects, and OVS-type grammars. The question arises: could there exist a cosmic grammar, a universal structural logic shared by both human and machine intelligence? And, if so, might a truly machinic language eventually emerge from it?

Until now, the principal method by which deep learning systems have acquired linguistic competence has been through imitation of the natural languages created by the human brain.

However, it is highly plausible that human language—evolved over roughly the last million years—is not the only possible mode of information transmission, nor necessarily the most efficient one.

After all, the human mechanism of oral communication is rather rudimentary: sounds and phonemes are articulated through organs originally designed for other biological purposes such as feeding (tongue, palate, teeth) or respiration (pharynx).

This historical contingency suggests that non-human communication systems—whether alien or artificial—could develop linguistic structures radically different from ours, and perhaps more optimal in terms of information transfer.

Furthermore, current large language models suffer from a fundamental lack of sensory grounding in the physical world.

A Transformer has no direct access to the perceptual experiences that give rise to qualia—electromagnetic radiation (color), spatial geometry (orientation), or acoustic waves (sound).

Its entire reasoning capacity relies almost exclusively on the inductive reconstruction of human linguistic structures, with the partial exception of multimodal systems whose feature spaces can integrate both textual and visual information.

Yet even these lack causal agency within their environments, leaving their sensorimotor coupling severely limited.

For all these reasons, it seems both reasonable and scientifically promising to grant artificial agents complete freedom to construct their own languages from scratch, rather than compelling them—through the ingestion of vast human corpora—to reproduce the expressive habits of our species.

Game Theory

Game theory has become a central framework for inducing groups of artificial agents to develop their own mechanisms and communication protocols, without any direct human intervention predefining the structure of the language.

In such environments, agents must collaborate to achieve a common objective, relying on dedicated message-exchange channels among themselves. The participants are typically implemented as neural networks—whether multilayer perceptrons, recurrent neural networks, or Transformers—and are trained through multi-agent reinforcement learning (MARL).

During each iteration of the game, every agent receives as input both the current state of the environment and the messages produced by the other agents.

Its output consists of two elements: an action to be executed within the environment and, in parallel, messages to be transmitted to its peers.
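The input/output contract just described can be sketched as a small interface. This is only an illustration: the class `LinearAgent`, its linear heads, and the one-hot encoding of incoming messages are assumptions made here, not details taken from any of the studies discussed.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class StepOutput:
    action: int   # index of the action executed in the environment
    message: int  # index of the symbol sent to the other agents


class LinearAgent:
    """Toy agent with one linear head per output (hypothetical, for illustration)."""

    def __init__(self, obs_dim, vocab_size, n_actions, n_peers, seed=0):
        rng = np.random.default_rng(seed)
        # Input = environment observation + one one-hot vector per peer message.
        in_dim = obs_dim + n_peers * vocab_size
        self.vocab_size = vocab_size
        self.w_action = rng.normal(scale=0.1, size=(in_dim, n_actions))
        self.w_message = rng.normal(scale=0.1, size=(in_dim, vocab_size))

    def step(self, observation, peer_messages):
        # Encode the symbols received from the peers as concatenated one-hot vectors.
        one_hot = np.eye(self.vocab_size)[peer_messages].ravel()
        x = np.concatenate([observation, one_hot])
        # Two parallel outputs: an environment action and an outgoing message.
        return StepOutput(
            action=int(np.argmax(x @ self.w_action)),
            message=int(np.argmax(x @ self.w_message)),
        )


agent = LinearAgent(obs_dim=4, vocab_size=3, n_actions=5, n_peers=2)
print(agent.step(np.zeros(4), [0, 2]))
```

In a real experiment the two heads would sit on top of a trained network and their outputs would be sampled rather than chosen greedily; the sketch only fixes the shape of the interface.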

The cooperative tasks can take various forms.

In a referential game, for instance, a speaker agent must select a target image from a set and send a message to a listener agent, which must decode (interpret) the signal and correctly identify the intended image.

In other tasks, such as cooperative object manipulation, a team of agents—each with a limited field of view—must locate an object, coordinate their movements, and push it toward a shared goal.

Across all these scenarios, the reward function encourages the emergence of communication protocols that facilitate successful collective performance.

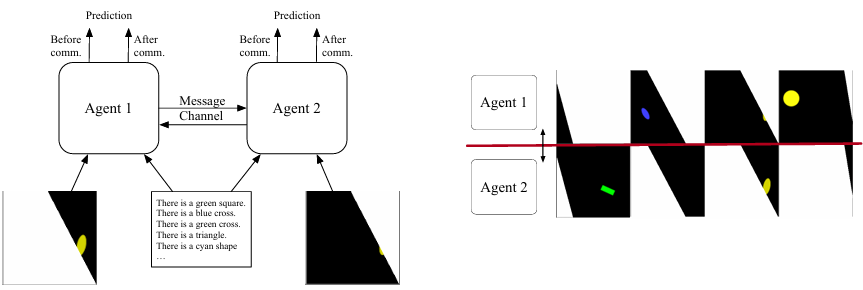

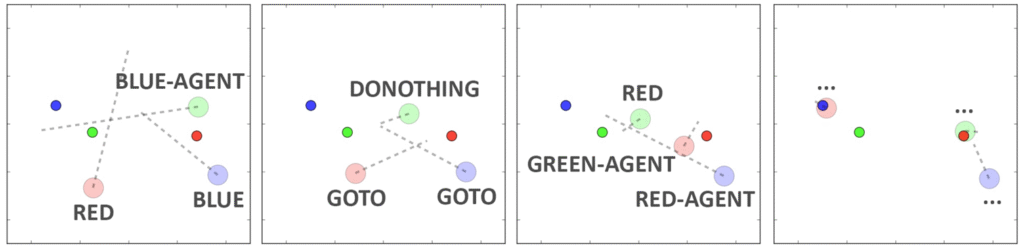

Figure 1. Example of a referential game.

On the left, a two-dimensional environment is divided into two regions, each visible only to one agent. Both agents receive a set of ten possible textual descriptions of the scene and must jointly identify the correct one. They exchange messages to obtain a clearer understanding of the portion of the 2D space that is hidden from their individual view.

Agents are rewarded not only for their own correct selections but also for those of their partner, which promotes the emergence of an efficient communication protocol.

On the right, four different partitions of the space are shown, each depicting a distinct configuration of objects. (Source: Graesser et al., 2019.)

In Search of a Cosmic Grammar: Shared by Humans and Machines

According to paleoanthropological consensus, language emerged during the Paleolithic as a response to the need for coordinating complex activities such as cooperative hunting, group defense, resource distribution, and the transmission of technologies—from lithic toolmaking to the mastery of fire.

Inspired by this functional origin, one may ask whether a similar phenomenon could be induced among machines: can swarms of artificial agents, placed in environments requiring cooperation, autonomously develop their own communication protocols? It would suffice to provide them with a channel for message exchange and allow them to invent their codes ex nihilo. The task of researchers would then be to analyze this informational flux from the outside, searching for patterns or nascent syntactic structures that might reveal the existence of a genuine machinic grammar.

From here arises a fundamental question: if such fully artificial grammars were to emerge, would they be intelligible to humans? After all, our linguistic and perceptual capacities are constrained by the architecture of our nervous system and sensory organs. There are thus good reasons to doubt that messages constructed through an alien grammar—whether created by exobiological intelligences or by machines—would be interpretable to us.

In this regard, one inevitably recalls Noam Chomsky’s much-debated thesis of Universal Grammar: a set of innate, genetically and neurologically grounded structures underlying all human languages. This grammar would include features such as hierarchical syntactic trees, phrases organized around a head, and, crucially, recursion. Chomsky once suggested that to a Martian observer, all human languages would appear as dialects of a single language. Yet precisely because of its biological anchoring, the human Universal Grammar would make communication with non-anthropomorphic intelligences lacking it exceedingly difficult.

Figure 2. Convinced of the feasibility of interstellar communication, Hans Freudenthal conceived in 1960 the project Lincos (Lingua Cosmica)—a protocol designed to establish dialogue with non-human intelligences. Relying on the universality of mathematics, Freudenthal structured the language in a Gödelian fashion: natural and binary numbers form its irreducible foundation. Upon this substrate, operands, formulas, and the full logic of first-order reasoning are inductively constructed. Lincos even allowed the formulation of meta-linguistic and self-referential statements.

Nevertheless, views such as those of Chomsky, Stanisław Lem, or the Sapir–Whorf hypothesis—which emphasize the incommensurability of radically distinct cognitive experiences—may be overly pessimistic. There are reasons to postulate the existence of a possible Cosmic Grammar, common to any communicative system—human, artificial, or alien—emerging from the universal principles of information and computation. Several clues seem to support this conjecture:

The existence, in organic chemistry, of coding systems such as that of DNA molecules, where codons possess semantic significance (correspondence with amino acids) and even exhibit pre-linguistic prefix structures.

The omnipresence of recursion itself throughout nature—from self-similar fractal patterns in plants like broccoli and ferns, to the Droste effect, to turbulent fluids.

The formal framework offered by the Chomsky hierarchy, which establishes that, at least theoretically, sufficiently powerful automata are capable of recognizing regular patterns, recursive (context-free) structures, and context-dependent ones.

The following sections explore a selection of studies published between 2016 and 2025 that experimentally investigate the emergence of communication among artificial agents.

Will we find within them features reminiscent of human languages?

Let us proceed with the exploration.

Atomic Symbols or Morphemes — Lazaridou, Peysakhovich & Baroni (2017)

The work of Lazaridou, Peysakhovich, and Baroni introduced a pioneering approach based on referential games. In these setups, one agent (the receiver) must choose the target image from two candidates, while another agent (the sender), who knows the correct answer, must transmit the necessary information to enable correct identification.

Communication takes place through a single atomic symbol, drawn from a predefined vocabulary.

The sender’s architecture consists of a feed-forward convolutional network, whose convolutional section resembles that of VGG-16. Its output layer applies a softmax function, distributing probabilities across all possible symbols in the vocabulary.

The receiver is implemented as a multilayer perceptron, whose binary softmax output corresponds to one of two possible choices — left or right.

Upon receiving the message, the receiver selects an image. If the selection is correct, both agents receive a reward of 1; otherwise, the reward is 0.

In this experiment, communication remains purely atomic, which, in linguistic terms, would correspond to the transmission of a single morpheme.

No syntax is present.
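The game loop described in this section can be reproduced in a few lines if the neural networks are replaced by lookup tables. The sketch below is a deliberately simplified stand-in, not the authors' implementation: the tabular logits, the REINFORCE-style update, the fixed baseline of 0.5, and all names and hyperparameters are assumptions chosen for illustration.

```python
import numpy as np


def softmax(z):
    z = z - z.max()
    e = np.exp(z)
    return e / e.sum()


def train_referential_game(n_objects=4, vocab_size=4, episodes=20000, lr=0.1, seed=0):
    rng = np.random.default_rng(seed)
    sender = np.zeros((n_objects, vocab_size))    # logits: target object -> symbol
    receiver = np.zeros((vocab_size, n_objects))  # logits: symbol -> score per object
    rewards = []
    for _ in range(episodes):
        # Draw a target and a distractor, then randomize their left/right position.
        target, distractor = rng.choice(n_objects, size=2, replace=False)
        candidates = np.array([target, distractor])
        rng.shuffle(candidates)

        # Sender: sample one atomic symbol given the target.
        p_sym = softmax(sender[target])
        symbol = rng.choice(vocab_size, p=p_sym)

        # Receiver: sample left (0) or right (1) given the symbol and the candidates.
        p_pick = softmax(receiver[symbol, candidates])
        pick = rng.choice(2, p=p_pick)

        # Shared reward: 1 if the receiver picked the target, 0 otherwise.
        reward = 1.0 if candidates[pick] == target else 0.0
        advantage = reward - 0.5  # chance level used as a fixed baseline

        # REINFORCE: gradient of the log-probability of each sampled choice.
        grad_s = -p_sym
        grad_s[symbol] += 1.0
        sender[target] += lr * advantage * grad_s
        grad_r = -p_pick
        grad_r[pick] += 1.0
        receiver[symbol, candidates] += lr * advantage * grad_r

        rewards.append(reward)
    # Return both tables and the mean reward over the last (up to) 2000 episodes.
    return sender, receiver, float(np.mean(rewards[-2000:]))


sender, receiver, recent_accuracy = train_referential_game()
print(recent_accuracy)
```

Reading the rows of `sender` after training shows which symbol each object tends to be mapped to. Nothing forces the mapping to be one-to-one, so several objects may end up sharing a symbol.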

OVS Structures — Mordatch & Abbeel (2017)

In Mordatch & Abbeel (2017), the agents move within a two-dimensional space and can make only partial and localized observations. Their tasks include meeting at a designated location or collaboratively moving objects.

Each agent is assigned both a position and a color, can direct its visual attention toward specific regions of the space, and can physically interact with other agents or objects.

At each timestep, an agent emits a symbol c, drawn from a vocabulary C of size K, which is broadcast throughout the entire system. Initially, these symbols have no predefined meaning; their semantic content emerges during training.

The agents are implemented as multilayer perceptrons (i.e., non-recurrent neural networks). Their loss function is computed from the reward obtained in each episode. Since all operations are differentiable, the loss gradient can be backpropagated across the entire swarm, enabling joint optimization.
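Emitting a discrete symbol is not differentiable in itself, so end-to-end backpropagation requires some continuous relaxation of the symbol choice. One common option is the Gumbel-softmax relaxation, sketched below in NumPy. Whether it matches the exact setup of the paper is an assumption here; the function name and the temperature values are illustrative only.

```python
import numpy as np


def gumbel_softmax(logits, temperature, rng):
    """Return a relaxed (soft) one-hot sample over the vocabulary."""
    # Gumbel(0, 1) noise makes the argmax of the perturbed logits a categorical sample.
    u = rng.uniform(low=1e-12, high=1.0, size=logits.shape)
    gumbel = -np.log(-np.log(u))
    z = (logits + gumbel) / temperature
    z = z - z.max()  # numerical stability
    e = np.exp(z)
    return e / e.sum()


logits = np.array([1.0, 0.5, -0.5, 0.0])  # scores for a vocabulary of size K = 4
soft = gumbel_softmax(logits, temperature=1.0, rng=np.random.default_rng(0))
sharp = gumbel_softmax(logits, temperature=0.1, rng=np.random.default_rng(0))
print(soft, sharp)
```

With the same noise, lowering the temperature keeps the most probable symbol unchanged and pushes the vector closer to a one-hot symbol, while a higher temperature gives smoother gradients.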

After millions of interaction episodes, a new purely machine language emerged, exhibiting the following grammatical properties:

– Each symbol became associated with a verb, an object, or an agent.

– When communication was disabled, the agents invented non-verbal signals (e.g., moving in circles) to compensate for the absence of language.

– The emergent grammar was compositional: to describe actions or observations, agents often used symbol sequences. In many cases—especially in motion commands—the grammatica automatica exhibited an OVS (Object-Verb-Subject) structure, which is extremely rare among human languages but intriguingly found in Klingon, the constructed language of Star Trek.

Figure 3. OVS syntactic structure. Large, faint circles represent agents; small circles represent target objects. The labels next to each large circle denote the symbols emitted by that agent. Each square corresponds to a timestep, progressing from left to right. The red agent sequentially emits the symbols “red”, “go_to”, and “green_agent”. (Source: Mordatch & Abbeel 2017.)

Symbol Chains and Proto-Phrases — Havrylov & Titov (2018)

The work of Havrylov & Titov (2018) marks a decisive advance: here, agents no longer communicate through atomic symbols, but through entire sequences of signs.

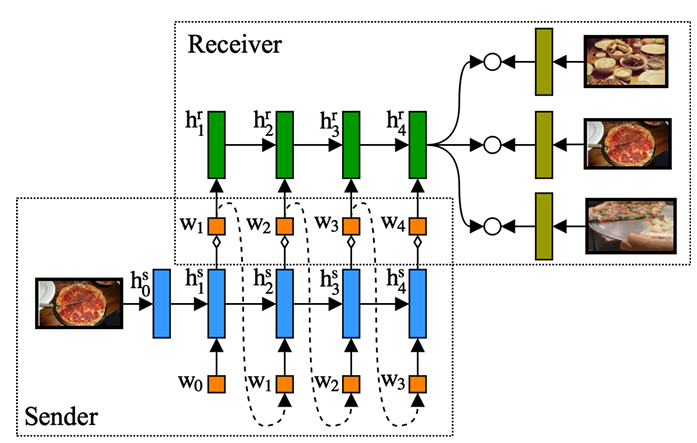

As in the setup by Lazaridou et al., two agents, a sender and a receiver, must solve a referential game. From the MS-COCO image dataset, one image is selected and presented to the sender. The sender must then transmit to the receiver a message m, consisting of a sequence of k symbols drawn from a vocabulary of 10 000 symbols. The receiver is shown several candidate MS-COCO images and must identify the correct one based on the received message.

Figure 4. Architecture of the sender and receiver in Havrylov & Titov (2018). All images are preprocessed using a pretrained VGG-16 network; the output of its relu7 layer is used to create an embedding vector representing the image. This vector is then processed by an LSTM-based sender, whose internal states are depicted as blue rectangles. The emitted symbols in the sequence are shown as small orange squares, and the sequence terminates when the <eos> token appears. The receiver, also implemented as an LSTM (with green internal states), reads the sequence until <eos>. Its final hidden state is compared via dot-products against the image embeddings in the dataset, and the results are passed through a softmax layer to produce the final prediction. (Source: Havrylov & Titov.)
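The final step of the receiver, dot products against candidate embeddings followed by a softmax, can be sketched as follows. The vectors here are random placeholders standing in for the LSTM hidden state and the VGG-derived image embeddings, and the function name `receiver_predict` is hypothetical.

```python
import numpy as np


def receiver_predict(hidden_state, candidate_embeddings):
    """Score each candidate image by a dot product and normalize with a softmax."""
    scores = candidate_embeddings @ hidden_state  # one score per candidate
    scores = scores - scores.max()                # numerical stability
    exp_scores = np.exp(scores)
    return exp_scores / exp_scores.sum()


rng = np.random.default_rng(0)
candidates = rng.normal(size=(5, 16))  # 5 candidate images, 16-dim embeddings
# Placeholder hidden state: close to candidate 3, as after reading a good message.
hidden = candidates[3] + 0.01 * rng.normal(size=16)
probs = receiver_predict(hidden, candidates)
print(probs.argmax(), probs.round(3))
```

During training, the log-probability assigned to the true image would typically serve as the learning signal; that part is omitted here.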

The experimental results revealed several remarkable regularities:

– Symbol sequences displayed prefixes referring to object categories such as food or bears.

– Not all symbols contributed equally: deleting some of them caused a significant drop in accuracy, while others were largely redundant.

– This asymmetry suggests the emergence of an internal hierarchical structure analogous to linguistic phrases, in which a head and its modifiers play distinct roles. For example, in the human phrase the white bear of Siberia, the word bear carries more semantic weight than its modifiers. Similarly, in some agent messages, a proto-phrase structure appeared, composed of a central head and complementary symbols.

The researchers also succeeded in bringing this proto-language closer to natural language by applying regularization techniques during training.

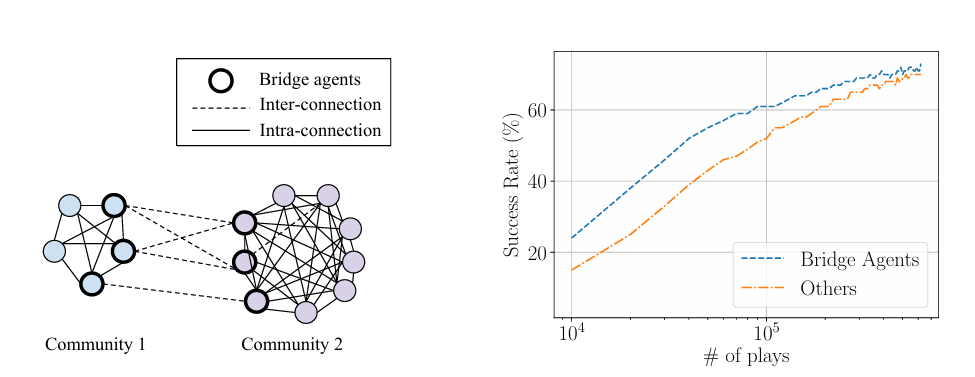

Languages, Dialect Continua, and Linguistic Creolization — Graesser, Cho & Kiela (2019)

In the study by Graesser, Cho, and Kiela (2019), the authors conducted extensive analyses of the linguistic properties of the communication protocols developed by agents engaged in referential games.

The operational scheme of the agents and their communication mechanisms followed the setup illustrated in Figure 1. Architecturally, each agent comprised two input modules (a ResNet for image processing and a GRU for textual description processing), an intermediate fusion module, and two output modules:

– a value module, assigning scores to each description, and

– an emitter module, generating the messages to be sent to the other agent.

The emerging lingua automatica displayed the following properties:

– Asymmetry in dyadic protocols.

When only two agents participated, communication protocols were typically asymmetric. Each agent failed to understand its own idiolect — the messages it produced — while successfully interpreting its partner’s. These idiolects were mutually unintelligible.

– Communication in larger populations.

When more than three agents were included, a shared language emerged spontaneously. Approximately 60 000–65 000 iterations were required to reach a 70 % communication success rate, and 150 000–200 000 iterations to exceed 75 % accuracy.

– Hybrid languages and creolization.

When two previously isolated agent communities were brought into contact, a new common language emerged. The demographically dominant group tended to impose its communication protocol. If both groups were similar in size, a hybrid language formed instead.

– Linguistic continuum.

In multi-community chains, a dialect continuum appeared: mutual intelligibility was high between neighboring populations but decreased with topological distance. Densely connected topologies promoted linguistic homogenization.

Figure 5. Linguistic convergence between agent communities.

On the left, two initially isolated communities develop mutually unintelligible communication protocols. After approximately 10^4 episodes (right image), the communities begin interacting. As training progresses, the number of successful communications becomes dominant, indicating the emergence of a shared protocol.

Bridge agents — those connecting neighboring communities — exhibit the highest degree of mutual intelligibility. (Source: Graesser et al.)

Transformer Architecture — Mannan Bhardwaj (2025)

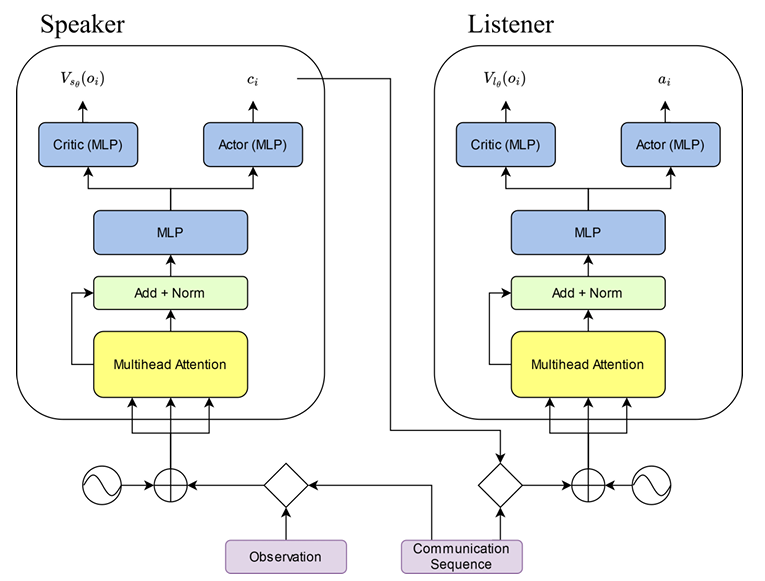

In 2025, independent U.S.-based researcher Mannan Bhardwaj was the first to propose the use of transformer architectures for inter-agent communication.

Figure 6. Each agent consists of a single transformer module equipped with multi-head attention and a multilayer perceptron (MLP). The emitter contains one head responsible for generating symbol sequences (c_i), while the receiver includes another head that determines the action to take (a_i). Both agents also share a third head, the critic, responsible for self-evaluation, which is required for reinforcement learning. (Source: Mannan Bhardwaj, 2025).

The study analyzed the interaction dynamics between two transformer-based networks—the speaker and the listener—which must communicate to solve a variety of cooperative games:

– Simple symbolic language.

The speaker observes a letter (A, B, C) and may send the listener a sequence of up to three symbols from a small vocabulary (a, b, c). After a few training episodes, clear correspondences emerge (A → aa, B → bb).

– Shapes and colors.

The environment is a 3 × 3 grid forming colored patterns such as squares, diagonals, crosses, vertical lines, and T-shapes. Agents develop a code in which colors tend to be represented by letters and shapes by sequential patterns. For example, “eee” and “aaa” correspond to a red T and a blue T, respectively.

– Spatial language.

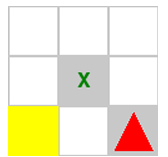

A 3 × 3 grid represents nine possible cells. One agent—the observer—has full visual access, while the other—the rescuer—must move to the cell containing a survivor. The observer communicates the target position via a sequence of three characters (vocabulary size = 3).

When no obstacles are present, communication remains efficient. However, if an obstacle must be bypassed, efficiency deteriorates. The observer must now encode both the survivor’s position (9 cells) and the obstacle’s position (8 non-overlapping cells), yielding 72 distinct configurations to be represented by only 27 possible symbol chains (3 characters over a 3-token vocabulary). The speaker cannot effectively compress this information, resulting in degraded communication performance.
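The counting argument above can be checked directly. The short script below recomputes the number of configurations and of available messages, and expresses both in bits. The helper names are chosen here for illustration, and the calculation assumes that the observer needs to convey the full (survivor, obstacle) configuration.

```python
import math


def count_messages(vocab_size: int, length: int) -> int:
    """Number of distinct strings of a fixed length over the vocabulary."""
    return vocab_size ** length


def count_configurations(n_cells: int) -> int:
    """Survivor in any cell, obstacle in any of the remaining cells."""
    return n_cells * (n_cells - 1)


def min_message_length(n_configs: int, vocab_size: int) -> int:
    """Smallest fixed length whose message space covers every configuration."""
    length = 0
    while vocab_size ** length < n_configs:
        length += 1
    return length


n_messages = count_messages(vocab_size=3, length=3)  # 27
n_configs = count_configurations(n_cells=9)          # 72
print(n_messages, n_configs)
print(round(math.log2(n_messages), 2), round(math.log2(n_configs), 2))  # 4.75 6.17
print(min_message_length(n_configs, vocab_size=3))   # 4
```

The channel carries about 4.75 bits per message while the full configuration needs about 6.17 bits, so a lossless code is impossible at length three. With the same three-token vocabulary, four symbols (81 strings) would be enough in principle.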

Figure 7. Example of a rescue scenario. The yellow square represents the rescuer, the red triangle the survivor, and the X the obstacle. The observer must provide sufficient information for the rescuer to navigate to the correct cell. (Source: Mannan Bhardwaj, 2025).

Although Bhardwaj’s results were modest, this was largely due to limited computational resources: as an independent researcher, he trained single-head transformers with far fewer parameters than contemporary large-scale models.

Nevertheless, his work holds historical significance—it was the first study to apply transformer architectures to inter-machine communication, opening a path that subsequent research would explore with greater computational power and more complex environments.

Conclusion

The studies reviewed above are particularly fascinating because they demonstrate that machines are, in principle, capable of designing communication protocols ex nihilo, without human intervention.

We have traversed a landscape of exotic linguistic structures — from OVS constructions, rare in natural languages but present in machine codes, to unidirectional protocols, in which the speaker literally does not interpret the meaning of its own messages.

Figure 8. Lingua ignota digitalis.

From the output layer of a neural network emerge unknown symbols, incomprehensible to the human mind. Artistic rendering by DALL·E.

On a practical level, these findings open the door to the creation of swarms of autonomous agents capable of coordinating their actions without explicit human programming:

– Autonomous drones performing reconnaissance or executing tactical strikes.

– Industrial robots synchronizing tasks without centralized supervision.

– Virtual agents collaboratively exchanging and processing data.

In all these scenarios, members of the swarm develop their own automatic language, spontaneously and without being programmed by humans.

However, it must be acknowledged that the observed linguistic abilities remain embryonic. The environments are simplified, and the results modest — there is no evidence yet of recursion or contextual sensitivity. These limitations may largely stem from the use of less powerful NLP architectures, such as early seq2seq RNNs or transformers with minimal depth and capacity.

Time alone will tell whether, with more ambitious computational resources and deeper architectures, a genuinely machine-born language might emerge — one that bridges the gap between symbolic communication and autonomous cognition.

Further Reading

– Lazaridou, Peysakhovich & Baroni (2017), “Multi-agent Cooperation and the Emergence of (Natural) Language”.

– Mordatch & Abbeel (2017), “Emergence of Grounded Compositional Language in Multi-Agent Populations”.

– Havrylov & Titov (2018), “Emergence of Language with Multi-agent Games: Learning to Communicate with Sequences of Symbols”.

– Graesser, Cho & Kiela (2019), “Emergent Linguistic Phenomena in Multi-Agent Communication Games”.

– Mannan Bhardwaj (2025), “Interpretable Emergent Language Using Inter-Agent Transformers”.