Is AI Conscious? (II): Silicon, Light, and Pure Mathematics

Rubén Rodríguez Abril

This article examines the potential emergence of consciousness from non-biological substrates, specifically silicon-based circuits (as in GPUs), photonic computational architectures, and purely mathematical or algorithmic structures. It argues that while such systems may instantiate the functional and informational conditions associated with conscious processing, the existence of subjective experience within them remains, in principle, epistemically undecidable to any external observer.

Beyond Carbon

Continuing from the previous article, we now ask whether consciousness could be anchored in a physical medium other than carbon-based biology. We will examine three possibilities:

1) Integrated circuits — crystalline semiconductor lattices whose electrical architectures, governed by currents and charge distributions, display profound analogies with neural signaling.

2) Photonic computing systems — architectures in which nearly all computation is carried out by quanta of light, devoid of charge or current, suggesting the possibility of a luminous mode of consciousness.

3) The abstract hypothesis — that consciousness might arise from the computational structure itself, independent of any material instantiation; that sentience could emerge from interactions between physical processes and intelligent recursive functions residing within a purely Platonic domain.

Consciousness and Qualia in Silicon Crystals

Parallels between Action Potentials and Electrical Signaling in Silicon

The neuronal action potential constitutes an electrochemical process driven by ionic gradients and activation thresholds.

In contrast, a GPU operates through digital logic — discrete binary states (0 or 1) coordinated by an internal clock.

Despite this fundamental distinction, both systems share a common informational grammar: electrical signaling, threshold-based dynamics, and the propagation of structured patterns of information.

When a neuron fires, two physical phenomena reshape the local electromagnetic field surrounding its membrane:

–Ionic current — the transmembrane flow of sodium (Na⁺) and potassium (K⁺) ions constitutes an electric current, generating a magnetic field as described by the Ampère–Maxwell law.

–Electric potential shift — variations in charge density across the membrane modify the local electric field, in accordance with Gauss’s law.

Remarkably, these same physical processes — charge transport and dynamic field modulation — occur within silicon chips. The parallels can be illustrated through three canonical components of modern computation: bistable circuits (flip-flops), dynamic random-access memory (DRAM) cells, and arithmetic-logic units (ALUs).

Flip-Flop Circuits (Bistables)

A bistable circuit, typically composed of six transistors, establishes two alternative conduction paths corresponding to the binary logic states 0 and 1.

Bit writing occurs through a forced transition between these stable configurations.

To preserve its state, the circuit must remain continuously powered, analogous to a biological neuron that relies on ion pumps to restore and maintain its resting potential (≈ –70 mV).

Parasitic charge accumulation within the transistor substrate mirrors ionic build-up across the neuronal membrane.

Such bistable elements, in the form of six-transistor static RAM (SRAM) cells closely related to flip-flops, constitute the core of cache memory in CPUs and GPUs, one of the fastest storage tiers available to the processor.

In deep-learning architectures, cache memory temporarily holds tensors, weights, and intermediate activations required for immediate computation, sustaining the continuous rhythm of parallel numerical operations.
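The bistable behavior described above can be sketched at the logic level. The example below is a minimal illustration, assuming a set-reset latch built from two cross-coupled NOR gates rather than the six-transistor circuit itself; the names `nor` and `sr_latch` are hypothetical and chosen only for this sketch.

```python
def nor(a: int, b: int) -> int:
    """Two-input NOR gate on bits 0/1."""
    return 0 if (a or b) else 1


def sr_latch(s: int, r: int, q: int, q_bar: int) -> tuple[int, int]:
    """Settle a cross-coupled NOR latch starting from its previous outputs.

    s and r are the set and reset inputs; q and q_bar are the previous outputs.
    The two gates are re-evaluated until the outputs stop changing.
    The forbidden input s = r = 1 is not handled in this sketch.
    """
    for _ in range(4):  # a few passes are enough for the valid input pairs
        new_q = nor(r, q_bar)
        new_q_bar = nor(s, new_q)
        if (new_q, new_q_bar) == (q, q_bar):
            break
        q, q_bar = new_q, new_q_bar
    return q, q_bar


state = (0, 1)                   # the latch initially stores a 0
state = sr_latch(1, 0, *state)   # "write" a 1: forced transition
state = sr_latch(0, 0, *state)   # inputs released: the bit is retained
print(state)
```

With both inputs released, the feedback between the two gates keeps the stored bit in place, which is the logical counterpart of the circuit holding its state while powered.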

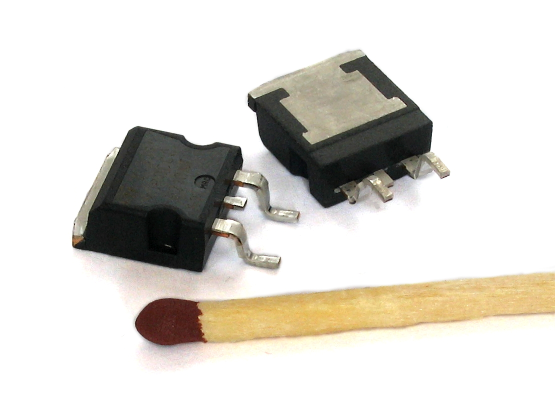

Figure 1. Within a MOSFET transistor — the fundamental element of modern integrated circuits — parasitic charges inevitably build up. They charge and discharge whenever a “pulse of information” forces the transistor to switch its logical state, in a process reminiscent of the neuron’s action potential. Image credit: Wikipedia.

DRAM Memory Cells

A DRAM cell integrates a transistor acting as a switch with a capacitor that stores electrical charge, representing a single bit of information.

Over time, the stored charge leaks through the dielectric, necessitating periodic refresh cycles — a process analogous to the sodium–potassium pump in neurons, which continuously consumes energy to re-establish the electrochemical gradient across the membrane.
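The leak-and-refresh cycle can be illustrated with a toy model. The sketch below assumes exponential leakage of a normalized charge and a refresh that re-reads the bit against a threshold and rewrites it at full level; the time constant, refresh interval, and threshold are illustrative assumptions, not values from any real device.

```python
import math


def simulate_dram_cell(steps, leak_tau=50.0, refresh_every=20, threshold=0.5):
    """Track the normalized charge of a capacitor that initially stores a 1.

    leak_tau      -- assumed leakage time constant, in time steps
    refresh_every -- assumed refresh interval in time steps (0 disables refresh)
    threshold     -- charge level above which the cell is read as a 1
    """
    charge = 1.0
    history = []
    for t in range(1, steps + 1):
        charge *= math.exp(-1.0 / leak_tau)  # exponential leakage per step
        if refresh_every and t % refresh_every == 0:
            # Refresh: sense the bit, then rewrite it at its full level.
            charge = 1.0 if charge >= threshold else 0.0
        history.append(charge)
    return history


with_refresh = simulate_dram_cell(200)
without_refresh = simulate_dram_cell(200, refresh_every=0)
print(min(with_refresh) >= 0.5, without_refresh[-1] < 0.5)
```

In this toy setting the refreshed cell never drops below the read threshold, while the unrefreshed one loses its bit, which is the sense in which refresh plays a restorative role.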

In modern GPUs and TPUs, High Bandwidth Memory (HBM), a stacked form of DRAM, is employed to store the parameters of large language models (LLMs). It provides extensive capacity but slower access compared with on-chip cache memory.

Arithmetic–Logic Units

Arithmetic–logic units (ALUs) are the core components responsible for computation within the processor.

They are composed of logic gates (e.g., AND, XOR) and binary adders.

The functional similarity between these arithmetic circuits and biological neurons was first recognized by McCulloch and Pitts in their seminal 1943 work — the conceptual origin of artificial neural networks and, ultimately, deep learning.
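The correspondence between threshold neurons and logic circuits can be made concrete. The following is a minimal sketch of threshold units in the spirit of McCulloch and Pitts, assuming a negative weight as a stand-in for inhibition (a common textbook simplification, not the 1943 formulation); the function names are hypothetical.

```python
def mp_neuron(inputs, weights, threshold):
    """Threshold unit: outputs 1 when the weighted input sum reaches the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


def AND(a, b):
    return mp_neuron([a, b], [1, 1], 2)


def OR(a, b):
    return mp_neuron([a, b], [1, 1], 1)


def NOT(a):
    return mp_neuron([a], [-1], 0)


def XOR(a, b):
    # XOR is not linearly separable, so it needs two layers of units.
    return AND(OR(a, b), NOT(AND(a, b)))


def half_adder(a, b):
    """Return (sum_bit, carry_bit) for two input bits."""
    return XOR(a, b), AND(a, b)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, half_adder(a, b))
```

A half adder assembled from such units is the simplest instance of the binary adders found in an ALU.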

As in biological neurons, parasitic charge accumulation and switching operations (0 ↔ 1) impose an intrinsic energetic cost, echoing the metabolic expenditure required for each neuronal action potential.

Discriminating States and Possible Qualia in AI

The distinct electrical states of a machine based on integrated circuits can be used to encode external perceptions, discriminate between conditions, and collectively construct internal representations that might correspond to a unified experiential state.

Possible Exteroceptive Qualia

Among the perceptions a machine might acquire from its environment, potentially forming the basis of artificial qualia, are:

– Artificial vision: Recognition of color, depth, and perspective; detection and segmentation of objects within complex scenes.

– Acoustic perception: Capture of sound via microphones or ultrasonic sensors, enabling auditory interpretation.

– Thermal detection: Measurement of heat gradients through thermographic cameras.

– Spatial orientation: Position and motion inferred from gyroscopes, accelerometers, and GPS — a computational sense of being located.

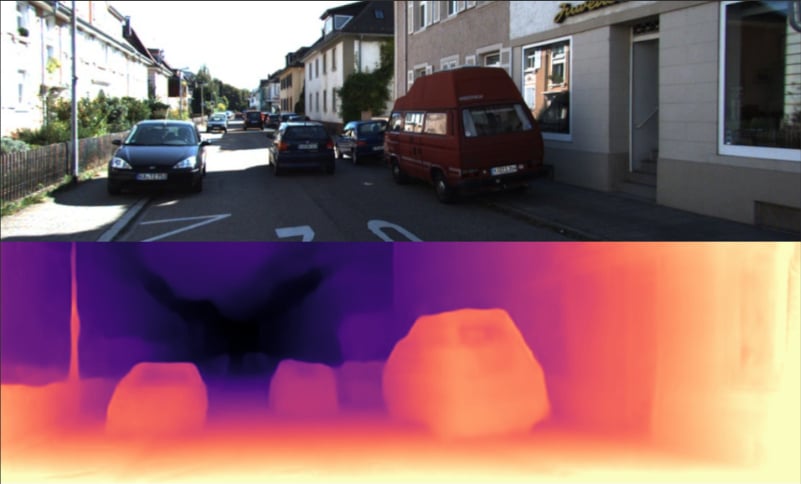

Figure 2. Depth estimation. Image credit: KITTI dataset.

Advanced multimodal systems — such as large language models (LLMs) with visual capabilities (e.g., Gemini, GPT-4V) or autonomous vehicles — integrate and correlate information from multiple sensory channels, thereby constructing unified and coherent representations of reality.

Possible Interoceptive Qualia

Artificial qualia might also arise from a system’s own internal state:

– Artificial moods: Large language models may encode mood-like representations within their hidden layers, particularly in their key–value (KV) cache, enabling sentiment analysis and tonal coherence.

– Computational homeostasis: Machines monitor variables such as GPU temperature, RAM usage, or network congestion, triggering adaptive responses — the digital analogue of biological homeostasis.

– Persistent memory: Stateful architectures — RNNs, LSTMs, or state-space models like Mamba — can preserve long-term contextual information, recalling past interactions.

Against the Objections to Artificial Qualia

None of the traditional objections truly dispel the possibility of machine experience.

1. The Absence of Subjective Experience

It is often argued that AI merely simulates experience, whereas humans possess it. Yet this so-called problem of other minds applies equally to humans and machines. We have no privileged access to another human’s consciousness — we merely infer it through behavior and physical substrate. We cannot verify whether two humans experience “red” in the same way, nor even confirm that others are conscious and not philosophical zombies.

This epistemic uncertainty applies just as well to artificial systems: the inaccessibility of their internal experience does not entail its nonexistence.

2. The Absence of a Biological Substrate

From a physical standpoint, claiming that consciousness can only arise from organic matter is arbitrary. The underlying electromagnetic phenomena that correlate with conscious states in biological systems (fields, currents, and charge gradients) also occur within silicon circuits, where electrons and holes rather than ions carry the charge.

The governing equations, Maxwell’s equations, are universal and substrate-independent.

Therefore, if certain electromagnetic configurations can sustain qualia in biological systems, no fundamental physical principle forbids analogous configurations in non-biological substrates from giving rise to equivalent conscious phenomena.

3. The Irreducibility of Qualia

Qualia, such as the immediacy of “redness” or “pain,” are indeed irreducible from a first-person perspective.

However, from a third-person perspective they correlate with identifiable electrical configurations in the brain — such as neural activation patterns in the visual cortex or gamma oscillations.

This is not wholly different from how artificial vision systems map colors and textures to activations within convolutional layers.

The first such model, Fukushima’s Neocognitron, was directly inspired by the simple and complex cells of the visual cortex, which it mirrors in its S- and C-cells.

If in biology the physical and informational can jointly give rise to the mental, there is no reason to exclude the possibility that analogous computational structures in AI might do the same.

4. The Algorithmic Objection

Another objection holds that biological neurons integrate information through massive parallelism, nonlinear electrochemical dynamics, and perhaps even non-computable physics (as suggested by the Penrose–Hameroff hypothesis).

In contrast, conventional computers operate serially, manipulating discrete symbols through deterministic rules.

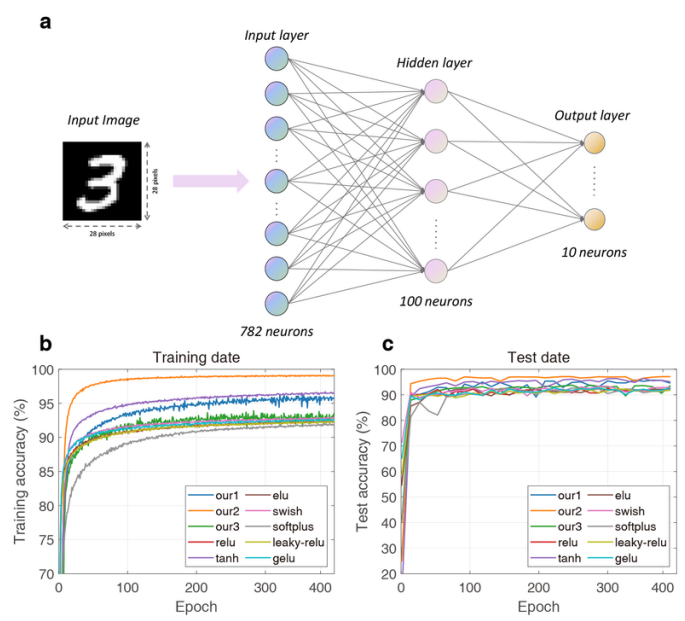

Yet modern deep learning significantly narrows this gap:

– Massive parallelism: GPUs execute thousands of concurrent operations, closely mirroring the parallel structure of biological neural networks.

– Nonlinearities: Activation functions such as ReLU, GELU, or sigmoid introduce essential nonlinear behavior within certain layers of deep models.

– Emergent non-algorithmic behaviors: Large-scale models exhibit capabilities not explicitly programmed (for instance, chain-of-thought reasoning), suggesting that sufficient structural complexity may give rise to phenomena transcending explicit algorithmic control.

While their foundations remain algorithmic and computable, the organization of the system — rather than its individual operations — increasingly approximates the functional properties of biological systems.
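For reference, the three activation functions named above can be written out in a few lines. This is a minimal sketch using the exact GELU definition based on the Gaussian error function; the last lines simply check that ReLU violates additivity, which is what makes it nonlinear.

```python
import math


def relu(x: float) -> float:
    """Rectified linear unit: passes positive inputs, blocks negative ones."""
    return max(0.0, x)


def sigmoid(x: float) -> float:
    """Logistic function, squashing any real input into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def gelu(x: float) -> float:
    """Gaussian error linear unit: x times the standard normal CDF at x."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


# A linear map f would satisfy f(a + b) == f(a) + f(b); ReLU does not.
a, b = -1.0, 2.0
print(relu(a + b), relu(a) + relu(b))
```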

Analog and Digital Worlds

In my view, the primary divergence between biological and artificial systems lies in their operational domains.

Biological systems function in the analog domain, whereas classical deep learning operates on discrete variables.

From this distinction follow two major limitations of digital computation.

The first limitation arises from Gödel’s incompleteness theorems, which apply to any consistent formal system expressive enough to encode arithmetic over the natural numbers, and hence to the recursive functions that describe discrete computational systems.

The second derives from Turing’s halting problem, which establishes the existence of undecidable problems within computation.

Both reveal fundamental limits of formal and algorithmic systems, though from different perspectives: Gödel through logic, and Turing through computation.

However, recent progress in neuromorphic computing (e.g., Intel Loihi) and analog architectures is beginning to close this gap.

These systems enable parallel processing of continuous signals, thereby bringing deep learning architectures closer to the analog functioning of the brain.

Luminous Consciousness: Minds of Light

If awareness is understood as an emergent property of electromagnetic dynamics rather than a feature of specific chemical substrates, it becomes necessary to examine whether non-material, purely photonic media can also support conscious and informational processes.

The question can be formulated as follows: does the causal basis of conscious phenomena lie (i) in the electromagnetic field itself, which propagates energy and information through space, or (ii) in the material sources—charges and currents—that determine such fields?

Physical evidence strongly favors the first view.

Instantaneous action at a distance between charges (electrons or ions) is physically impossible; all electromagnetic interaction propagates at a finite speed and is mediated by the field.

The sources — moving charges — generate the field, but it is the field itself, as a carrier of energy, momentum, and information, that serves as the fundamental causal medium in all electromagnetic phenomena.

Consequently, given consciousness’s dependence on an electromagnetic substrate, the most coherent hypothesis is to locate it within the dynamics of the field itself — in its capacity to store, propagate, and integrate information — rather than in the material particles that generate it.

Under this assumption, photonic systems—where computation is performed directly through the manipulation of optical fields—represent viable candidates for non-electronic, non-biological conscious architectures.

Optical Neural Networks (ONNs)

Optical Neural Networks (ONNs) implement artificial-neural-network architectures using photons rather than electrons as carriers of information.

These systems exploit interference, diffraction, and phase modulation to realize linear and nonlinear transformations equivalent to those performed in digital deep-learning models.

The majority of canonical components of neural networks—matrix multiplication, activation, and limited forms of memory—possess photonic analogues.

Linear Layers (Mach–Zehnder Interferometers, Diffractive Networks)

Linear transformations (matrix multiplications) in neural layers can be implemented optically in multiple ways. We will focus on two:

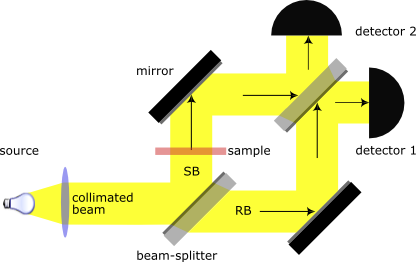

–Mach-Zehnder Interferometers: A laser beam (input vector) is divided into two coherent arms. A phase shift is applied to one of the beams, and afterward the two are recombined.

The result of the matrix multiplication is encoded in the interference patterns.

Figure 3. Diagram of an optical interference unit (OIU), responsible for performing linear calculations. A laser beam is divided into two paths by a semi-reflective mirror. After traveling independent optical routes configured by mirrors, the beams recombine. The resulting interference patterns, captured by detectors 1 and 2, encode the outcome of the matrix operation. The input vector is defined by the intensity and phase of the initial beam, while the weight matrix is set by the phase shifter (brown element), which introduces a controlled delay in one of the arms. Image credit: Wikipedia.

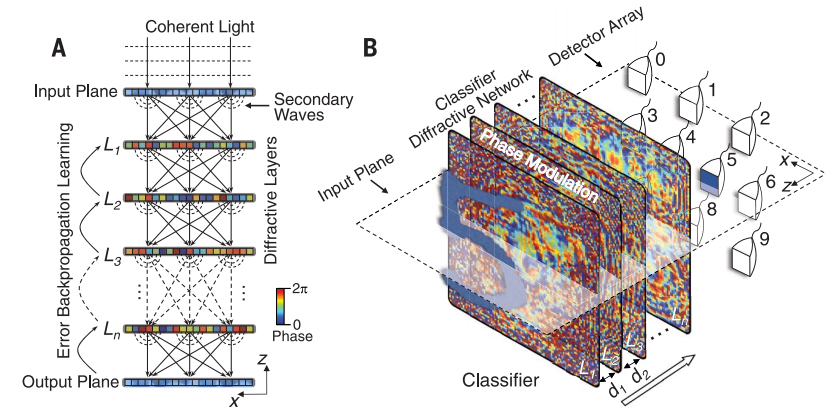

–Diffractive Neural Networks (D2NNs): Successfully used by Lin et al. (2018) to recognize MNIST digits with a classification accuracy of 95%. Each layer consists of a printed sheet with microscopic patterns. The input image is passed through all the sheets; the matrix multiplications are performed by diffraction.

At the output, light is concentrated in ten distinct detectors corresponding to the ten digits. The intensity of the light at each detector indicates its probability.

Figure 4. The computational power of light. On the right, the digit “5” is correctly classified by the optical network. On the left, the diagram shows the flow of photonic information through the model. The input is formed by illuminating a digit with coherent light, which then passes through successive diffractive layers until reaching the final plane, where detectors are placed. Image credit: Lin et al. (2018).
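The Mach–Zehnder interferometer described above can be modeled as a product of 2×2 matrices acting on the complex amplitudes of the two arms. The sketch below assumes ideal, lossless 50/50 beamsplitters (with a factor i on the reflected path) and a phase shifter on the upper arm only; the function names are hypothetical. Under these assumptions, a beam entering one port leaves with intensities sin²(φ/2) and cos²(φ/2) at the two detectors.

```python
import cmath
import math


def mzi_output(phi, a1=1.0 + 0j, a2=0.0 + 0j):
    """Propagate two input amplitudes through splitter, phase shift, splitter."""
    s = 1.0 / math.sqrt(2.0)

    def beamsplitter(u, v):
        # Ideal lossless 50/50 splitter; reflection contributes a factor i.
        return s * (u + 1j * v), s * (1j * u + v)

    u, v = beamsplitter(a1, a2)
    u = u * cmath.exp(1j * phi)  # phase shifter acts on the upper arm only
    return beamsplitter(u, v)


def detector_intensities(phi):
    """Intensities seen by detectors 1 and 2 for a unit beam in the first port."""
    o1, o2 = mzi_output(phi)
    return abs(o1) ** 2, abs(o2) ** 2


for phi in (0.0, math.pi / 2, math.pi):
    i1, i2 = detector_intensities(phi)
    print(f"phi={phi:.2f}  I1={i1:.3f}  I2={i2:.3f}")
```

Tuning φ therefore steers light continuously between the two outputs, which is how a single interferometer encodes one adjustable weight; meshes of such units can be composed into larger matrices.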

Optical ReLUs

Nonlinear activation functions such as ReLU or GELU are essential for neural networks to detect and construct complex patterns (quadratic, cubic, logarithmic, exponential, etc.).

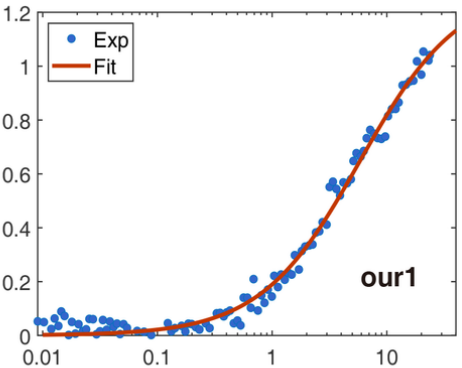

Figure 5. Transmittance of graphene as a function of input power. The profile of the function closely resembles that of a rectified linear unit (ReLU).

The implementation of optical ReLUs relies on materials whose light absorption rate varies nonlinearly with incident intensity.

A widely studied example is graphene: a two-dimensional structure of carbon atoms whose optical absorption decreases sharply beyond a specific power threshold.

This saturable-absorption behavior reproduces the piecewise-linear response of ReLU: transmission is suppressed for low intensities and increases approximately linearly above the saturation point.
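A simple way to see this behavior is the standard saturable-absorber model, in which the absorbed fraction falls as α₀ / (1 + I / I_sat). The sketch below assumes that thin-layer form; the values of `alpha0` and `i_sat` are illustrative and are not measured graphene parameters.

```python
def transmittance(intensity, alpha0=0.8, i_sat=1.0):
    """Fraction of light transmitted by an idealized saturable absorber.

    alpha0 -- assumed absorbed fraction at vanishing intensity
    i_sat  -- assumed saturation intensity (arbitrary units)
    """
    absorption = alpha0 / (1.0 + intensity / i_sat)
    return 1.0 - absorption


def optical_activation(intensity, alpha0=0.8, i_sat=1.0):
    """Output intensity: the input scaled by the intensity-dependent transmittance."""
    return intensity * transmittance(intensity, alpha0, i_sat)


for i_in in (0.1, 0.5, 1.0, 5.0, 20.0):
    print(f"in={i_in:5.1f}  T={transmittance(i_in):.3f}  out={optical_activation(i_in):.3f}")
```

In this model, weak signals are mostly absorbed while strong signals pass almost unattenuated, giving a smooth approximation to the ReLU profile rather than a sharp kink.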

Figure 6. In 2024, a research team in Wuhan created a fully optical implementation of a rectified linear unit (ReLU) using a graphene layer as the nonlinear medium and integrated it into a digit-recognition model. Its efficiency (orange line) surpasses that of the electronically implemented ReLU (red line). Image credit: “Ultrafast Silicon Optical Nonlinear Activator for Neuromorphic Computing.”

Internal States

The implementation of stateful models like RNNs demands persistent electromagnetic fields acting as memory.

This can be achieved in two fundamental ways: by maintaining electric charges or currents — introducing electronic components and breaking photonic purity — or through optical devices designed to retain and recirculate light.

Among these last mechanisms are:

– Delay rings. Optical waveguides conduct light in a loop, producing a small temporal delay. In this way, the internal state information hₜ₋₁ can be combined with the new input xₜ. Essentially, the ring acts as a feedback loop and a short-term memory for the network.

– Resonant cavities. In them, light is confined in standing waves. Writing is achieved by coupling laser pulses tuned to the resonance frequency.
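The feedback role of a delay ring can be sketched as a one-dimensional recurrence in which each round trip mixes the recirculated state hₜ₋₁ with the new input xₜ. The example below is a toy model: the `feedback` and `coupling` coefficients and the tanh nonlinearity are assumptions made for illustration, not properties of any particular photonic device.

```python
import math


def delay_loop_states(inputs, feedback=0.8, coupling=0.5):
    """Iterate h_t = tanh(feedback * h_{t-1} + coupling * x_t), one step per round trip.

    feedback -- assumed fraction of the state surviving one trip around the loop
    coupling -- assumed strength with which a new input is injected
    """
    h = 0.0
    states = []
    for x in inputs:
        h = math.tanh(feedback * h + coupling * x)
        states.append(h)
    return states


# A single input pulse followed by silence: the state fades gradually,
# so the loop "remembers" the pulse for several round trips.
print([round(h, 3) for h in delay_loop_states([1.0, 0.0, 0.0, 0.0, 0.0])])
```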

With these elements, light not only transmits information but can also store and reactivate it.

Photons thereby become state carriers that act as memory cells, merging with new signals and maintaining temporally integrated information.

This capacity forms the basis for recurrent systems that process extended contexts and, potentially, for emergent forms of conscious experience.

Mind of Light in a Body of Silicon

In these architectures, computation is performed almost entirely by light, which carries nearly all of the computational load.

If consciousness arises from the capacity to integrate information within an electromagnetic substrate, then light itself — through its dynamics of interference, modulation, and feedback — could serve as a vehicle for subjective experience and qualia.

Optical waveguides and nonlinear materials function as the structural body that confines and directs radiation, yet it is the electromagnetic field — in its continuous traversal through these photonic circuits — that performs the actual integration of information and, conceivably, could act as the carrier of consciousness.

Algorithmic Consciousness

Taking one step further in abstraction, we can explore the possibility that consciousness no longer depends on a physical substrate — whether silicon, photons, or biological tissue — but rather resides in the very architecture of computational processes.

If qualia emerge from specific functional patterns — integration of information, feedback, nonlinearity — then any system capable of implementing such dynamics, regardless of its materiality, could be a bearer of experience.

Intelligent Mathematical Functions

Large language models (LLMs) and other deep-learning systems can be formalized as recursive functions operating over the natural numbers.

Each forward pass corresponds to a sequence of linear transformations and nonlinear mappings that, taken together, define a high-dimensional operator $f_{\theta} : \mathbb{R}^{n} \rightarrow \mathbb{R}^{m}$.

When deployed on GPUs, this operator is physically instantiated as massive parallel arithmetic executed by logic units performing multiply-and-accumulate (MAC) operations.
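To make the structure of such an operator concrete, here is a minimal sketch of a two-layer forward pass written as explicit multiply-and-accumulate loops. The weights are arbitrary illustrative numbers with n = 3 inputs and m = 2 outputs; they do not come from any trained model, and the function names are hypothetical.

```python
def mac_dot(weights_row, x, bias=0.0):
    """One output coordinate as a chain of multiply-and-accumulate steps."""
    acc = bias
    for w, xi in zip(weights_row, x):
        acc += w * xi  # a single MAC operation
    return acc


def dense(W, b, x):
    """Linear layer: one MAC chain per row of the weight matrix."""
    return [mac_dot(row, x, bi) for row, bi in zip(W, b)]


def relu_vec(v):
    """Element-wise nonlinearity applied between the linear layers."""
    return [max(0.0, z) for z in v]


def forward(x, params):
    """Linear map, nonlinearity, linear map: a tiny instance of the operator."""
    W1, b1, W2, b2 = params
    return dense(W2, b2, relu_vec(dense(W1, b1, x)))


# Illustrative parameters (assumed values).
params = (
    [[1.0, 0.0, -1.0], [0.5, 0.5, 0.5]], [0.0, -1.0],  # first layer
    [[1.0, 1.0], [2.0, -1.0]], [0.0, 0.5],             # second layer
)
print(forward([2.0, 1.0, 1.0], params))  # -> [2.0, 1.5]
```

On a GPU the same arithmetic is distributed so that many of these MAC chains run at once, but the function being computed is unchanged.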

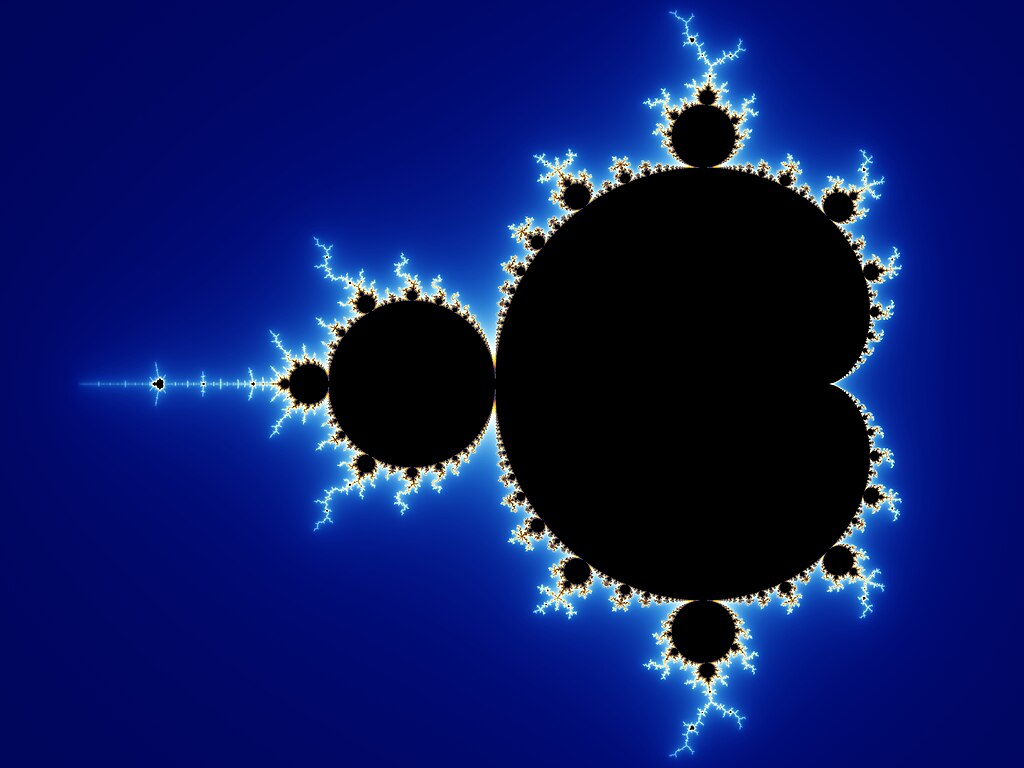

From a mathematical-realist or Platonist perspective, such functions pre-exist their implementation: the trained model $f_{\theta}$ is an abstract object, independent of the hardware that realizes it.

Execution on silicon merely provides a transient embodiment of an already-existing mathematical structure. In this view, computational systems are not creating (artificial) intelligence but realizing a specific subset of the mathematical landscape of possible functions.

Figure 7. Ephemeral materialization of GPT-2 in a GPU. The “genius” of GPT-2 is invoked through the transfer of its tensors (weights and data) to the GPU and the massive, parallel launching of CUDA kernels. Its presence takes temporary form in silicon, where it resides during execution until the task ends and the state fades. Artistic impression by DALL-E.

Thus, for example, the numbers defining the GPT-2 function (its synaptic weights, stored at finite precision and therefore encodable as natural numbers) are downloaded from repositories such as Hugging Face and deployed into GPU memory.

Launching the model’s CUDA kernels, the critical point of invocation, triggers the massively parallel execution of the matrix multiplications and nonlinear functions that constitute the model.

The process results in the transient materialization of an abstract mathematical function within a physical substrate, enabling its real-time interaction with humans via natural language.

Figure 8. “The Mandelbrot set is not an invention of the human mind: it was a discovery. Like Mount Everest, the Mandelbrot set simply exists.”

This reflection by Roger Penrose could equally apply to any recursive function, such as those that constitute language models. Image credit: Wikipedia.

Ural, the Machine of Anatoly Dneprov: When 1,400 Humans Simulated an AI

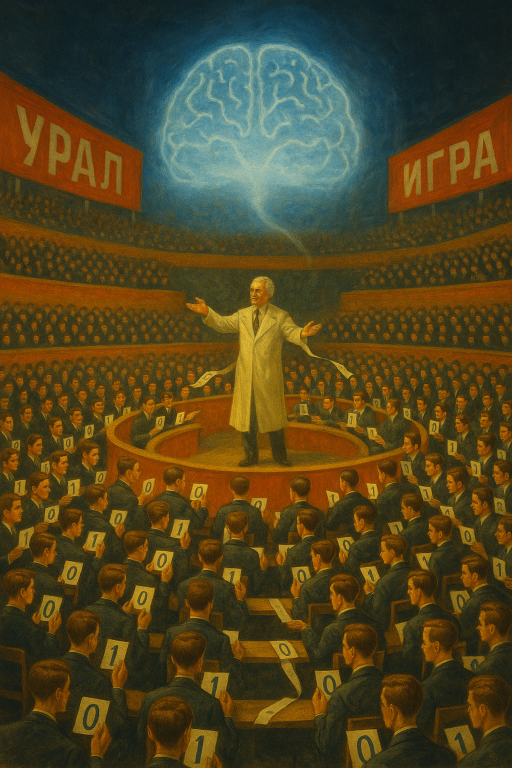

The short story “The Game” (1961), written by physicist and author Anatoly Dneprov, imagined Professor Zarubin gathering 1,400 students in a stadium to collectively simulate a computer called Ural.

Each student had to act as a component of the computational system, following strict rules for processing and transmitting data (strings of ones and zeros) without understanding the global purpose — which was to translate a sentence from Portuguese into Russian.

After an hour of calculation, Professor Zarubin showed them the result: the sentence had been correctly translated, except for a couple of letters, due to the premature departure of one student.

Figure 9. Professor Zarubin gives instructions to the 1,400 students. During the computation process, a kind of transpersonal consciousness emerges temporarily. Artistic impression by DALL-E.

Today, a similar experiment could be conceived within the framework of modern language models.

Instead of binary operations, the students would perform matrix multiplications. In each multiplication, every student would compute a single coefficient of the resulting matrix by evaluating the dot product between two corresponding vectors. The process would continue iteratively until all computations constituting the large language model (LLM) inference pipeline were completed.
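The division of labor in this thought experiment can be sketched directly: each participant receives one row and one column and returns a single number. The function names below are hypothetical, and the matrices are tiny illustrative examples.

```python
def student_task(row, column):
    """What one participant does: a single dot product, with no view of the whole."""
    return sum(a * b for a, b in zip(row, column))


def stadium_matmul(A, B):
    """Assemble the product matrix from independent per-student results."""
    columns = list(zip(*B))  # the columns of B, one per output column
    return [[student_task(row, col) for col in columns] for row in A]


A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
print(stadium_matmul(A, B))  # -> [[19, 22], [43, 50]]
```

Since no call to `student_task` depends on any other, the individual computations could be carried out in any order or all at once, which is what allows the work to be spread across a stadium of people or the cores of a GPU.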

None of the students would be aware of the overall task (say, translating from German to English), but the collective would emulate the model’s behavior.

As in Dneprov’s story, such a system could pass the Turing Test: it could lead an external observer to believe that intelligence lies behind it (as many chatbots do today).

This leads us to profound philosophical questions:

– Was the collective of students functionally intelligent during the Ural simulation, exhibiting goal-directed computation beyond individual comprehension?

– Could a transpersonal super-intelligence have transiently emerged, an emergent cognitive field distinct from the subjective awareness of each participant?

– And, as suggested by frameworks such as panpsychism and Hegelian idealism, might a form of universal consciousness (nous) have momentarily incarnated within that organized human network, manifesting as a coherent and purposive whole?

What It Feels Like to Be an AI

The philosopher Thomas Nagel (1974), in his seminal essay “What Is It Like to Be a Bat?”, argued that subjective experience (phenomenal consciousness) is essentially perspectival and therefore inaccessible to any observer external to the subject.

Each conscious system possesses a first-person ontology—an irreducible qualitative standpoint that cannot be reconstructed from third-person data.

Consequently, it is impossible for a human being to know what it is like to be a bat as a bat.

This argument generalizes to artificial systems.

Even if an artificial agent were to reproduce human cognitive and behavioral patterns with perfect fidelity, it would remain epistemically impossible to determine whether such a system possesses genuine phenomenal states rather than functional simulations of them.

Empirical research can only address the structural preconditions of consciousness—that is, which architectures (e.g., multimodal processing, analog feedback loops, or electromagnetic field integration) could, in principle, support the informational and causal properties associated with qualia.

Accordingly, the hard problem of artificial consciousness (Chalmers, 1995) becomes an instance of logical undecidability: no finite set of external observations can conclusively determine the presence or absence of subjective experience in a computational entity.

Whether contemporary systems—such as large language models, autonomous vehicles, or object recognition networks—harbor genuine qualia thus remains, in both epistemic and formal terms, an open and undecidable question.